• Anthropic has dropped a core AI safety pledge from its Responsible Scaling Policy.

• The original commitment limited model development without guaranteed safeguards.

• Competitive pressure and geopolitical dynamics drove the policy shift.

• The move highlights the gap between stated safety and operational outcomes.

For years, AI safety pledges functioned as a signal.

A signal to regulators.

A signal to enterprise buyers.

A signal to the public.

They communicated intent: that capability growth would not outpace safety readiness.

That alignment mattered as much as advancement.

Anthropic was one of the companies most closely associated with that positioning. Its Responsible Scaling Policy included a flagship commitment — a pledge not to train or release increasingly powerful AI systems unless adequate safety safeguards could be guaranteed in advance.

That pledge is now gone.

The company has revised its policy, removing the unilateral commitment to pause development if safety thresholds could not be met.

The rationale reflects the realities of the modern AI race.

Executives argued that slowing development alone — while competitors continued advancing — would not meaningfully reduce systemic risk.

In other words, restraint without industry alignment was viewed as strategically unsustainable.

So the policy shifted from hard constraints to conditional governance: increased transparency reporting, risk documentation, and threshold-based intervention rather than preemptive development pauses.

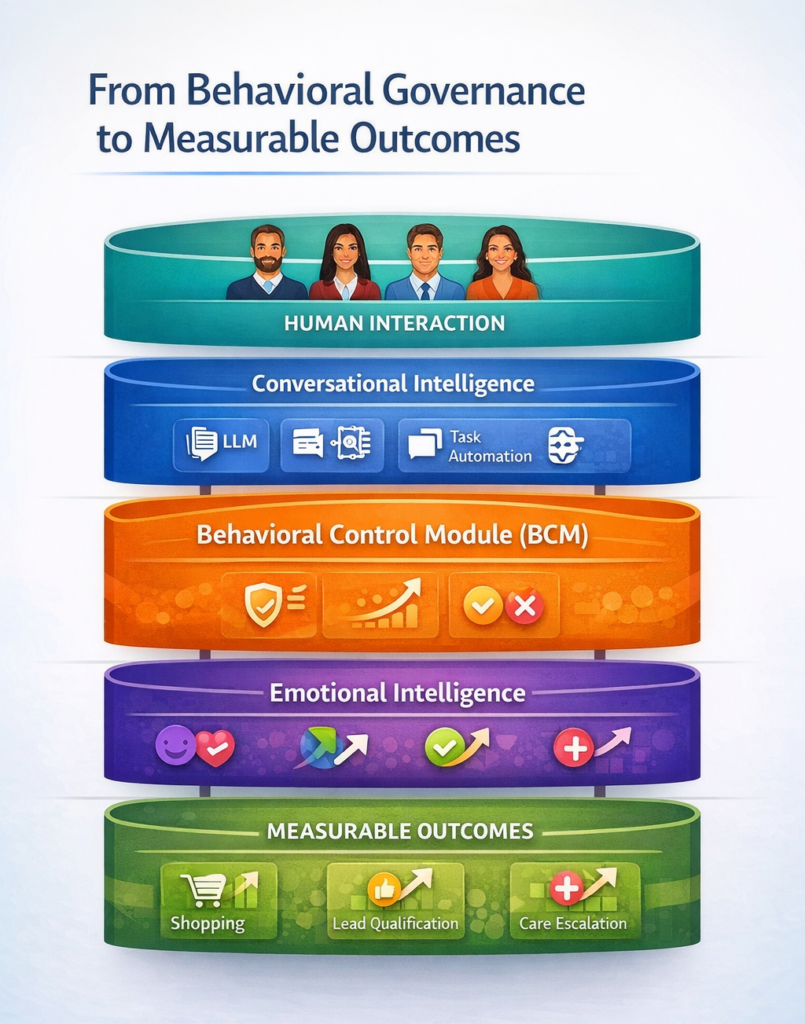

From Stated Safety to Operational Reality

This shift marks more than a policy update.

It reflects a structural tension inside AI deployment:

Capability incentives accelerate.

Safety incentives decentralize.

Public pledges can signal responsibility — but they do not inherently produce controlled outcomes.

Because outcomes are not governed by statements.

They are governed by system architecture.

A company can publish safety frameworks, transparency reports, and scaling policies — yet the operational behavior of deployed AI systems is determined by how they are constrained, monitored, and behaviorally governed in practice.

This is the difference between principle-level safety…

…and interaction-level containment.

The Competitive Pressure Problem

Anthropic’s reasoning underscores a broader industry dynamic.

AI development is no longer occurring in isolated corporate lanes.

It is unfolding inside:

• National competitiveness

• Defense partnerships

• Enterprise adoption races

• Venture capital acceleration

In that environment, unilateral restraint becomes economically and strategically fragile.

Safety becomes collective — but development remains competitive.

That asymmetry creates predictable pressure points.

If one actor slows…

…another accelerates.

And the net system risk may remain unchanged.

Why Outcomes Become the True Safety Measure

This is where the conversation shifts from pledges to performance.

Because AI risk does not materialize at the policy level.

It materializes at the interaction level.

- In customer service escalations.

- In healthcare triage errors.

- In legal misguidance.

- In mental health conversations.

Outcomes — not commitments — determine impact.

Which raises a more operational question:

Not “What safety principles exist?”

But “What behavioral controls are embedded inside deployed systems?”

Governance as Infrastructure, Not Messaging

Safety pledges operate upstream.

They guide research philosophy and development pacing.

But once AI is deployed into human interaction environments, outcome reliability depends on downstream governance mechanisms:

Role containment.

Escalation logic.

Emotional signal detection.

Drift prevention.

Response constraint frameworks.

These controls determine whether systems remain aligned in live environments — regardless of research pledges or corporate positioning.

In this way, safety becomes infrastructural rather than declarative.

The Industry Inflection Point

Anthropic’s policy change does not signal abandonment of safety.

But it does reflect a recalibration — one driven by competitive reality, regulatory gaps, and accelerating capability thresholds.

And it highlights a broader industry transition:

From aspirational safety…to operational governance.

Because in deployed AI systems, the most consequential question is not how safety is promised.

It is how outcomes are controlled.