AI without governance creates risk — especially in regulated and high-trust environments.

Enterprises are shifting their focus from model capability to measurable accountability.

Governance requires real-time emotion detection, behavioral guardrails, and defined escalation logic.

VERN AI provides the control layer that turns AI from conversational to accountable.

Most AI deployments fail at scale because they’re not governable: no risk controls, no measurable outcomes, no enforcement layer.

For the past several years, the dominant question in artificial intelligence has been about capability.

- How advanced is the model?

- How natural does it sound?

- How well does it reason?

Those were valid early questions. But for enterprises operating in regulated, high-trust, or high-liability environments, they are no longer the right ones.

The more important question is this:

Can this system be governed?

Because intelligence without governance does not create advantage. It creates exposure.

Why Capability Alone Is No Longer Enough

Most modern AI deployments rely on large language models that are optimized for fluency and breadth. They are trained to respond plausibly across a wide range of topics. That generality is powerful — but it is also unpredictable.

In low-stakes environments, unpredictability may be tolerable.

In healthcare, financial services, education, behavioral health, or enterprise customer service, it is not.

When AI systems are allowed to generate responses without runtime oversight, several risks emerge:

- Emotional escalation instead of de-escalation

- Inconsistent tone across similar situations

- Unintentional reinforcement of harmful behaviors

- Compliance exposure in regulated industries

- Reputational damage

Enterprises are increasingly recognizing that disclaimers and policy documents do not solve these problems. Governance must be embedded directly into system behavior.

What Governance Actually Means in AI

Governance is often misunderstood as policy. In practice, governance is operational control.

A governed AI system should be able to:

- Detect when emotional volatility is rising

- Adjust tone and response strategy accordingly

- Trigger predefined intervention pathways

- Escalate when thresholds are crossed

- Log and measure behavioral outcomes

This requires more than prompt engineering. It requires structured, measurable signals that sit alongside the language model and influence its behavior in real time.

Without that layer, organizations are relying on hope — not control.
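To make the idea of operational control concrete, here is a minimal sketch of a control layer that sits beside a language model. It assumes an upstream detector already supplies a single emotional intensity score per turn on a 0-100 scale. The class name, the action names, and the threshold values are all hypothetical and chosen only for illustration; they are not taken from any specific product.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    SOFTEN_TONE = "soften_tone"
    INTERVENE = "intervene"
    ESCALATE = "escalate_to_human"


@dataclass
class GovernanceLayer:
    # Hypothetical thresholds on an assumed 0-100 intensity scale.
    soften_at: float = 40.0
    intervene_at: float = 60.0
    escalate_at: float = 80.0
    history: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def is_rising(self, window: int = 3) -> bool:
        """True if the last `window` scores are strictly increasing."""
        recent = self.history[-window:]
        return len(recent) == window and all(
            a < b for a, b in zip(recent, recent[1:])
        )

    def decide(self, intensity: float) -> Action:
        """Record the score, pick an action, and log the decision."""
        self.history.append(intensity)
        if intensity >= self.escalate_at:
            action = Action.ESCALATE
        elif intensity >= self.intervene_at:
            action = Action.INTERVENE
        elif intensity >= self.soften_at or self.is_rising():
            action = Action.SOFTEN_TONE
        else:
            action = Action.CONTINUE
        self.audit_log.append(
            {"turn": len(self.history), "intensity": intensity, "action": action.value}
        )
        return action
```

Feeding this sketch the scores 10, 20, 30, 65, 85 yields continue, continue, soften tone (the trend is rising even though no threshold is crossed), intervene, and escalate, with one audit entry per turn. The point of the design is that the decision is made by explicit, inspectable rules rather than left to the model's prompt.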

The Emerging Divide: Governed vs. Ungoverned Systems

We are beginning to see a clear separation in the market.

On one side are systems optimized for engagement and scale.

On the other are systems engineered for accountability.

The difference is not cosmetic. It is architectural.

Ungoverned systems measure:

- Session length

- Engagement rates

- Click-through metrics

Governed systems measure:

- Emotional trajectory

- De-escalation success

- Intervention effectiveness

- Risk mitigation

- Compliance alignment

- Outcome consistency
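Two of these measures, emotional trajectory and de-escalation success, can be computed from logged per-turn intensity scores. The sketch below assumes each session is stored as a list of scores on a 0-100 scale; the function names and the `trigger` and `calm` cutoffs are illustrative assumptions, not established definitions.

```python
def trajectory_slope(scores):
    """Least-squares slope of intensity per turn; negative means calming."""
    n = len(scores)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(scores))
    var = sum((x - mean_x) ** 2 for x in range(n))
    return cov / var


def deescalation_success_rate(sessions, trigger=60.0, calm=40.0):
    """Share of sessions that reached `trigger` and ended at or below `calm`.

    Returns None when no session ever reached the trigger level.
    """
    escalated = [s for s in sessions if s and max(s) >= trigger]
    if not escalated:
        return None
    resolved = [s for s in escalated if s[-1] <= calm]
    return len(resolved) / len(escalated)
```

For example, a session scored 80, 60, 40, 20 has a slope of -20 per turn, and a set of sessions in which two escalated and one of those ended calm has a success rate of 0.5. Other definitions are possible; what matters is that the metric is defined in advance and computed the same way every time.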

As AI becomes more human-like — especially in the form of AI Humans and conversational avatars — expectations shift. If an interface resembles a person, users will judge it by human standards: empathy, responsibility, situational awareness, and restraint.

That standard cannot be met through fluency alone.

Where VERN AI Enters the Conversation

VERN AI was designed to address this precise gap.

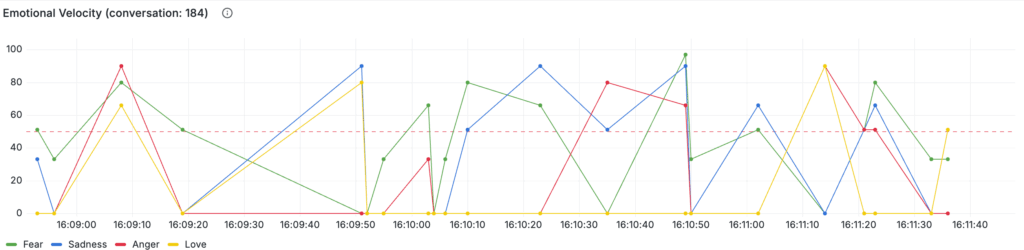

At the sentence level, VERN analyzes emotional signals — anger, sadness, fear, and love/joy — and measures their intensity. This is not sentiment tagging for reporting after the fact. It is structured emotional telemetry that can influence behavior in real time.

That signal becomes a governance layer.

When emotional thresholds are crossed, systems can:

- Shift response strategy

- Apply behavioral guardrails

- Trigger structured de-escalation protocols

- Activate escalation workflows

- Log measurable outcome data

In other words, emotional awareness becomes enforceable logic.

This transforms conversational AI from reactive text generation into governed interaction.
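One way to picture "enforceable logic" is a rule table that maps per-emotion thresholds to actions. The sketch below is an illustration only: the source does not show VERN's actual API or output format, so the score dictionaries, the 0-100 scale, the rule values, and the action names are all hand-written, hypothetical stand-ins.

```python
from typing import NamedTuple


class Rule(NamedTuple):
    emotion: str
    threshold: float
    action: str


# Hypothetical policy; a real deployment would define its own rules.
RULES = [
    Rule("anger", 70.0, "trigger_deescalation_protocol"),
    Rule("fear", 60.0, "apply_reassurance_guardrail"),
    Rule("sadness", 75.0, "escalate_to_human"),
]


def evaluate(sentence_scores, rules=RULES):
    """Return the rules whose emotion score meets or exceeds its threshold."""
    return [r for r in rules if sentence_scores.get(r.emotion, 0.0) >= r.threshold]


def govern(sentences_with_scores, rules=RULES):
    """Evaluate each (sentence, scores) pair and return an audit log."""
    log = []
    for text, scores in sentences_with_scores:
        fired = evaluate(scores, rules)
        log.append(
            {
                "sentence": text,
                "scores": scores,
                "actions": [r.action for r in fired],
            }
        )
    return log


# Stand-in scores, written by hand for this example.
conversation = [
    ("I am furious about this charge.", {"anger": 85, "sadness": 10, "fear": 5, "joy": 0}),
    ("Thanks, that helps.", {"anger": 5, "sadness": 0, "fear": 0, "joy": 60}),
]
audit = govern(conversation)
```

Here the first sentence fires the anger rule and the second fires nothing, and both outcomes are logged. Keeping the policy in data rather than in prompts means it can be reviewed, versioned, and audited like any other control.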

Governance as a Competitive Advantage

- Proper governance gives organizations the confidence to deploy to the public.

- Engagement with governed avatars feels more human and increases time on site.

- Clients in production report significant gains in their metrics.

There is a misconception that governance slows innovation.

In reality, governance accelerates adoption.

- Boards approve governed systems.

- Compliance teams approve governed systems.

- Risk officers approve governed systems.

And most importantly, customers trust governed systems.

As AI moves deeper into sensitive domains — mental health support, financial decision-making, healthcare navigation, enterprise service delivery — governance becomes a prerequisite, not a feature.

Model performance will continue to improve across the industry. Capability will become commoditized.

What will not be commoditized is control.

The Future of AI Deployment

The organizations that succeed in this next phase will not be those with the most impressive demos.

They will be those who can answer, clearly and defensibly:

- How is this system constrained?

- How does it detect risk?

- What happens when emotional volatility increases?

- How are outcomes measured?

- Where does accountability live?

AI capability opened the door.

Governance determines whether enterprises walk through it.

And in this next era of deployment, governed intelligence will define the difference between experimentation and sustainable advantage.