AI is getting faces. Engagement is up. Risk is up.

The real problem isn’t intelligence — it’s uncontrolled behavior.

Conversational drift kills enterprise adoption.

Guardrails block failure. They don’t drive success.

Behavioral governance is the missing layer.

Emotion is a performance signal.

SXSW 2026: AI moves from interface hype to governed outcomes.

In last week’s piece, we wrote about the interface shift currently reshaping artificial intelligence — the move from text to presence, and from presence to fully realized AI Humans. That transition will be on full display at SXSW 2026. Across industries, organizations are moving quickly to humanize AI, placing avatars, voices, and faces at the center of customer and patient interactions.

But the emergence of AI Humans introduces a second-order challenge that is receiving far less attention — and it is one that will ultimately determine whether these systems succeed or fail in enterprise environments.

Once AI becomes human-facing, its behavior matters as much as its intelligence.

The industry has spent the last two years solving for fluency: making models more conversational, more responsive, and more natural in tone. Yet fluency alone does not produce business value. A system can sound credible while still driving poor outcomes — escalating frustrated customers, mishandling emotionally sensitive interactions, or drifting away from defined objectives.

As AI takes on more human-like interfaces, the gap between conversational capability and behavioral reliability becomes increasingly visible.

The Engagement Breakthrough — and Its Limits

Human interfaces tend to improve interaction metrics. Organizations deploying AI avatars and conversational agents often report increases in engagement time, user satisfaction, and perceived accessibility. Faces capture attention in ways text cannot, and voice conveys nuance that static chat lacks.

However, these engagement gains can mask deeper operational risk.

A human-like interface raises user expectations. People instinctively project emotional intelligence, judgment, and situational awareness onto anything that looks or sounds human. When those expectations are not met, the disappointment is sharper — and the consequences more severe — than with traditional chatbots.

In other words, anthropomorphism amplifies both upside and downside.

A friendly avatar that mishandles a distressed patient interaction does more reputational damage than a text bot making the same mistake. The interface magnifies the behavioral failure.

Conversational Drift in Enterprise Systems

One of the most persistent barriers to enterprise AI adoption is conversational drift — the gradual movement away from intended goals during an interaction.

Drift manifests in multiple ways:

Responses become informational when emotional support is required.

Tone becomes overly clinical in sensitive contexts.

Conversations wander into irrelevant detail.

Escalation signals go unnoticed.

Compliance boundaries blur.

This is not typically a failure of model knowledge. Large language models are highly capable at retrieving and presenting information. Drift occurs because the system lacks situational governance: the ability to continuously interpret context, emotional state, and conversational trajectory, and to adjust behavior accordingly.

Without that governance layer, nothing keeps an interaction on course toward its intended goal.
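To make drift concrete, here is a minimal sketch of how it might be scored. It assumes the objective can be reduced to a small set of keywords, which is a deliberate simplification; a production system would more plausibly use embeddings or a trained classifier. The names `tokens`, `drift_score`, and `objective_terms` are hypothetical and chosen only for illustration.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase a turn and split it into a set of word tokens."""
    return set(re.findall(r"[a-z']+", text.lower()))


def drift_score(objective_terms: set[str], recent_turns: list[str]) -> float:
    """Fraction of recent turns that mention none of the objective terms.

    0.0 means every turn touched the objective; 1.0 means none did.
    Keyword overlap is an assumed stand-in for a real relevance model.
    """
    if not recent_turns:
        return 0.0
    off_topic = sum(
        1 for turn in recent_turns if not (tokens(turn) & objective_terms)
    )
    return off_topic / len(recent_turns)


objective = {"refund", "order"}
turns = [
    "Where is my refund?",
    "Our company was founded in 1999.",
    "We also sell gift cards.",
]
score = drift_score(objective, turns)  # two of three turns are off-objective
```

A score that rises over a sliding window of turns would be the signal that the conversation is wandering, even when each individual response is factually accurate.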

Why Guardrails Are Not Enough

Many AI deployments attempt to address behavioral risk through guardrails: prompt engineering, content filters, or restricted response frameworks. While necessary, these mechanisms are fundamentally preventative rather than directive.

Guardrails stop specific categories of failure. They do not actively steer conversations toward success.

An analogy is useful here. Guardrails on a highway prevent a car from leaving the road, but they do not ensure the driver reaches the correct destination. Enterprises require systems that do both: prevent harmful deviation and maintain progress toward defined objectives.

This distinction becomes critical in emotionally charged or outcome-sensitive environments such as healthcare navigation, financial advisory interactions, or customer retention scenarios.

The Emergence of the Behavioral Control Layer

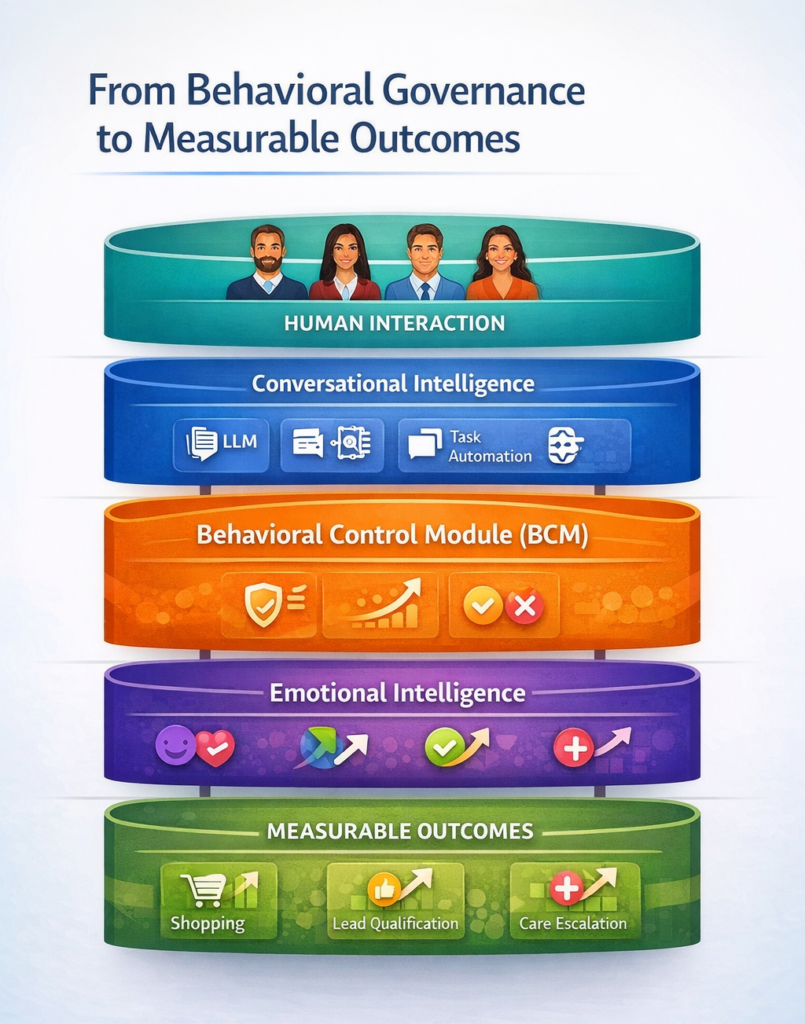

To address this gap, a new architectural layer is beginning to take shape — one focused not on what AI says, but on how it behaves while saying it.

This behavioral control layer operates during the interaction itself. It continuously evaluates signals that traditional conversational AI systems ignore:

Emotional tone

Conversational momentum

Frustration escalation

Trust indicators

Intent shifts

When misalignment is detected, the system intervenes in real time — adjusting tone, redirecting conversational pathways, or reinforcing objective alignment.
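The loop described above can be sketched in a few lines. This is an illustration under stated assumptions, not a description of any particular product: it assumes some upstream detector already scores each turn between 0 and 1, and the signal names, thresholds, and intervention labels are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TurnSignals:
    """Per-turn scores in [0, 1], produced by an assumed upstream detector."""
    frustration: float
    trust: float
    on_objective: float  # how closely the turn tracks the defined objective


def choose_intervention(
    history: list[TurnSignals],
    frustration_limit: float = 0.7,
    escalation_jump: float = 0.3,
    trust_floor: float = 0.3,
    objective_floor: float = 0.5,
) -> str:
    """Pick the adjustment to apply before the next response is generated."""
    if not history:
        return "none"
    current = history[-1]
    previous = history[-2].frustration if len(history) > 1 else current.frustration
    jump = current.frustration - previous
    # Frustration escalation: already high, or climbing sharply turn over turn.
    if current.frustration >= frustration_limit or jump >= escalation_jump:
        return "de_escalate"
    if current.trust < trust_floor:
        return "reinforce_trust"
    if current.on_objective < objective_floor:
        return "redirect_to_objective"
    return "none"
```

The ordering of the checks is a design choice: in this sketch emotional escalation takes priority over trust, and trust over objective alignment, on the assumption that a frustrated user will not respond well to redirection.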

The result is not merely safer AI, but more operationally effective AI.

Emotion as an Operational Signal

Emotion is often misunderstood in AI discourse as a cosmetic enhancement — something that improves user experience but remains peripheral to performance.

In reality, emotional state is often a strong predictor of conversational outcome.

A frustrated customer has a dramatically lower likelihood of conversion.

An anxious patient requires reassurance before information becomes actionable.

A confused user disengages long before a knowledge gap is resolved.

When AI systems treat all interactions as informational exchanges, they miss the emotional determinants that drive real-world results.

Embedding emotional detection into conversational governance allows systems to respond proportionally — de-escalating when necessary, reinforcing trust when possible, and accelerating decision-making when confidence is high.
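One way to picture a proportional response is as an ordered plan keyed on the detected emotion, so that reassurance comes before information for an anxious user. The sketch below assumes an emotion label is already available from some detector; the labels and step names are hypothetical placeholders rather than an established taxonomy.

```python
# Hypothetical emotion labels and response steps, for illustration only.
RESPONSE_PLANS: dict[str, list[str]] = {
    "frustrated": ["acknowledge", "de_escalate", "inform"],
    "anxious": ["reassure", "inform"],
    "confused": ["simplify", "check_understanding"],
    "confident": ["inform", "prompt_decision"],
}


def plan_response(emotion: str) -> list[str]:
    """Return the ordered steps for a reply.

    Unknown labels fall back to a plain informational reply.
    A copy is returned so callers cannot mutate the shared table.
    """
    return list(RESPONSE_PLANS.get(emotion, ["inform"]))
```

The point of the ordering is that information is rarely dropped; it is sequenced behind whatever the user's emotional state requires first.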

From Behavioral Governance to Outcome Measurement

Once behavior can be directed, performance metrics evolve.

Traditional AI KPIs focus on efficiency:

Response accuracy

Resolution time

Cost per interaction

Behaviorally governed systems introduce outcome-linked metrics:

Emotional de-escalation rates

Trust recovery indicators

Conversion influence

Retention stabilization

These measurements connect conversational conduct directly to business performance — a linkage that has historically been difficult to quantify.
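As an example of how one of these metrics might be computed, the sketch below defines an emotional de-escalation rate. The definition is an assumption made for illustration: of the conversations in which frustration reached a high level at any point, it counts the share that ended calm. The thresholds and the per-turn frustration scores are hypothetical inputs.

```python
from typing import Optional


def de_escalation_rate(
    conversations: list[list[float]],
    high: float = 0.7,
    calm: float = 0.3,
) -> Optional[float]:
    """Share of escalated conversations that ended calm.

    Each conversation is a list of per-turn frustration scores in [0, 1].
    Returns None when no conversation ever escalated, since the rate is
    undefined in that case.
    """
    escalated = [c for c in conversations if c and max(c) >= high]
    if not escalated:
        return None
    recovered = sum(1 for c in escalated if c[-1] <= calm)
    return recovered / len(escalated)


sample = [
    [0.2, 0.8, 0.2],  # escalated, then recovered
    [0.9, 0.9],       # escalated, never recovered
    [0.1, 0.2],       # never escalated, excluded from the rate
]
rate = de_escalation_rate(sample)  # one of two escalated conversations recovered
```

Restricting the denominator to conversations that actually escalated keeps the metric from being inflated by interactions that were calm throughout.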

Why This Matters Now

SXSW has long served as a proving ground for interface innovation. What makes 2026 distinct is that the interface conversation is maturing. The novelty of AI faces and voices is giving way to scrutiny around reliability, governance, and enterprise readiness.

Organizations are beginning to recognize that humanizing AI without governing it introduces new categories of risk. Brand reputation, compliance exposure, and customer trust now sit downstream of AI behavioral consistency.

The industry's central challenge is shifting from a design problem to an operational one.

What We’re Demonstrating in Austin

At SXSW, the focus will not simply be on AI Humans as an interface evolution. It will be on the infrastructure required to make those interfaces enterprise-grade.

This includes:

Real-time emotional signal detection

Behavioral adjustment during live interactions

Conversational objective enforcement

Performance measurement tied to business outcomes

The demonstration is less about what AI looks like and more about how it performs under governance.

The Next Phase of AI Adoption

Human interfaces represent a meaningful leap forward in accessibility and engagement. But they are only the visible layer of a broader transformation.

As AI becomes more human in presentation, it must also become more accountable in operation.

The organizations that succeed in deploying AI at scale will be those that treat behavioral governance not as an enhancement, but as core infrastructure — the connective tissue between conversational capability and measurable enterprise value.

SXSW 2026 will highlight that transition point: the moment when the conversation moves beyond what AI can say, and toward how reliably it can perform.