• AI outcomes don’t fail because of intelligence gaps — they fail because of behavioral inconsistency.

• Enterprises need governance inside the interaction, not just moderation outside it.

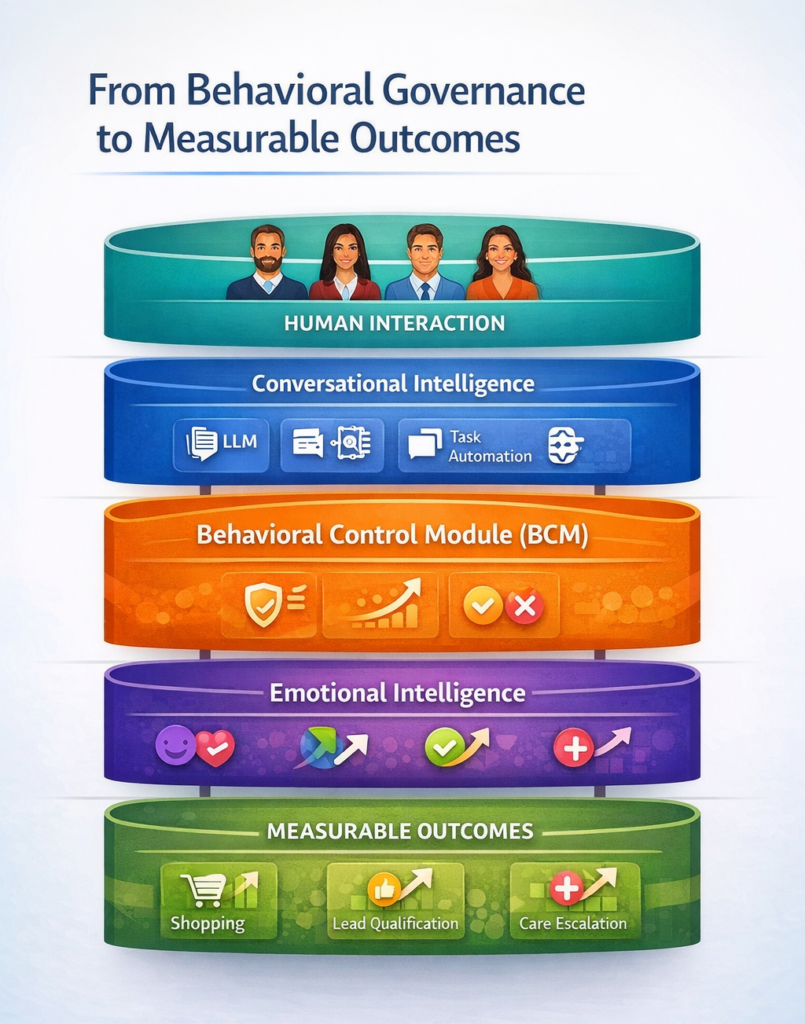

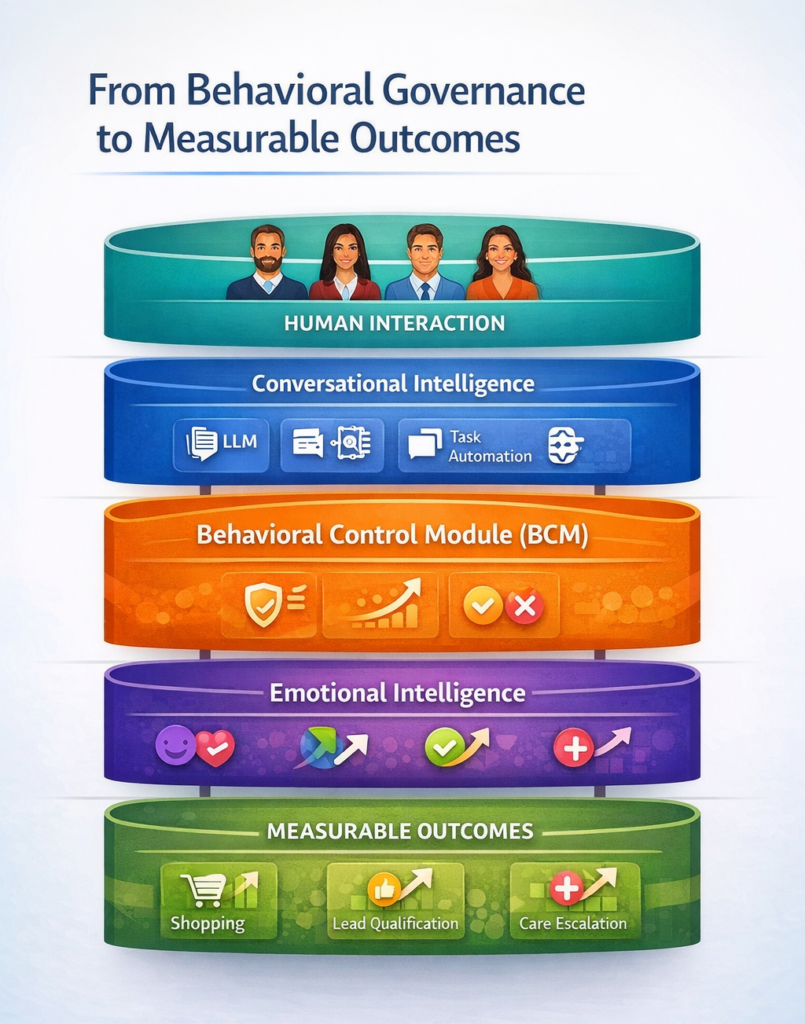

• A Behavioral Control Module (BCM)™ regulates how AI behaves under pressure.

• Behavioral containment is what makes Outcomes-as-a-Service measurable and reliable.

Artificial intelligence has advanced rapidly in capability.

Systems can summarize documents, simulate advisors, generate code, and converse fluently across domains. From a technical perspective, the progress is undeniable.

And yet enterprise adoption — particularly in high-consequence environments — continues to lag behind investment.

The barrier is not intelligence. It is behavioral reliability.

Enterprises do not deploy AI to generate language; they deploy it to produce outcomes.

- Conversion outcomes.

- Retention outcomes.

- Intake outcomes.

- De-escalation outcomes.

- Revenue outcomes.

And outcomes depend not only on what the AI says, but on how it behaves inside the interaction.

Where Outcomes Break Down

Most generative systems are optimized for conversational breadth.

They are designed to continue dialogue plausibly, expansively, and flexibly across topics. But outcome environments are not open-ended.

They are operationally defined.

- A legal intake AI must qualify viable cases.

- A healthcare assistant must escalate risk.

- A retail avatar must guide purchase decisions.

- A founder coach must improve pitch clarity.

If the system improvises outside its lane — even slightly — outcomes degrade.

Conversion drops.

Trust erodes.

Escalations fail.

Leads are misqualified.

Not because the AI lacked knowledge, but because its behavior was not governed toward the intended result.

The Behavioral Control Module

This is where the Behavioral Control Module (BCM)™ becomes outcome-critical infrastructure.

A BCM™ is an embedded governance layer that regulates how a conversational AI system behaves in real time — shaping interaction patterns to align with defined performance objectives.

It governs:

• Role adherence — staying within operational mandate

• Emotional calibration — adjusting tone to influence trust and receptivity

• Escalation timing — knowing when human intervention is required

• Reinforcement logic — avoiding validation of harmful or unproductive states

• Conversational containment — preventing drift outside outcome pathways

In effect, it turns conversation into guided performance architecture.

The system is no longer simply responding. It is operating toward a result.
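One way to picture this governance layer is as a check that sits between the model's draft reply and the user. The sketch below is a minimal illustration under stated assumptions, not the BCM™'s actual implementation: `Policy`, `Decision`, `govern`, and the keyword-based checks are hypothetical names and simplifications invented for this example. It covers only role adherence, containment, and escalation timing; emotional calibration and reinforcement logic would need far more than keyword matching.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    RESPOND = "respond"    # pass the model's draft reply through unchanged
    REDIRECT = "redirect"  # steer the user back to the role's mandate
    ESCALATE = "escalate"  # hand the conversation to a human


@dataclass
class Policy:
    # Illustrative fields only; not a published BCM schema.
    allowed_topics: set[str]
    risk_terms: set[str]
    redirect_message: str = "Let's finish the current step first."
    escalation_message: str = "I'm connecting you with a person who can help."


@dataclass
class Decision:
    action: Action
    reply: str


def govern(user_message: str, draft_reply: str, topic: str, policy: Policy) -> Decision:
    """Apply governance checks in priority order before a reply is sent."""
    text = user_message.lower()

    # Escalation timing: a risk signal outranks every other rule.
    if any(term in text for term in policy.risk_terms):
        return Decision(Action.ESCALATE, policy.escalation_message)

    # Role adherence and containment: off-mandate topics are redirected.
    if topic not in policy.allowed_topics:
        return Decision(Action.REDIRECT, policy.redirect_message)

    # Inside the mandate with no risk signal: the draft reply goes out.
    return Decision(Action.RESPOND, draft_reply)


# Hypothetical legal-intake configuration.
policy = Policy(allowed_topics={"intake"}, risk_terms={"emergency"})
decision = govern("I was in a car accident", "Tell me more.", "intake", policy)
print(decision.action.value, "-", decision.reply)
```

In this sketch the escalation check runs first so that a risk signal is never masked by an off-topic redirect. The `topic` label is assumed to come from an upstream classifier that is not shown here.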

Why Behavioral Governance Produces Measurable Outcomes

Outcomes require consistency.

Consistency requires containment.

Containment requires governance.

Without behavioral control, conversational AI behaves variably — producing different engagement patterns, emotional impacts, and decision pathways across users.

That variability makes outcome attribution unreliable.

You cannot tie revenue lift to an interaction model that behaves differently each time.

A BCM™ standardizes behavioral response patterns in ways that align with defined success metrics.

For example:

- In retail environments, emotional calibration influences purchase confidence.

- In legal intake, role containment ensures only qualified leads progress.

- In founder coaching, reinforcement logic builds delivery confidence over time.

- In mental health triage, escalation governance ensures risk signals are not missed.

Because behavioral pathways are regulated, performance becomes repeatable.

And repeatability is what makes outcomes measurable.
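Repeatability can itself be given a rough number. As a hedged sketch, assume each session is logged as an ordered list of behavioral steps (the step labels and the `pathway_consistency` helper are hypothetical, chosen for illustration). The share of sessions that follow the single most common pathway is one crude indicator of how consistently the system behaves.

```python
from collections import Counter


def pathway_consistency(sessions: list[list[str]]) -> float:
    """Return the share of sessions that followed the most common pathway."""
    if not sessions:
        raise ValueError("need at least one session")
    # Lists are not hashable, so each pathway is counted as a tuple.
    counts = Counter(tuple(steps) for steps in sessions)
    _, top_count = counts.most_common(1)[0]
    return top_count / len(sessions)


# Hypothetical log: three sessions follow the intended path, one drifts.
logged = [
    ["greet", "qualify", "book"],
    ["greet", "qualify", "book"],
    ["greet", "qualify", "book"],
    ["greet", "small_talk"],
]
print(pathway_consistency(logged))  # 0.75
```

A value close to 1.0 suggests that most interactions follow one governed pathway. A low value suggests the kind of variability that complicates attribution.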

From Conversational AI to Outcome Infrastructure

This is the architectural shift underlying Outcomes-as-a-Service.

Traditional AI deployments sell access:

- Access to a chatbot.

- Access to an avatar.

- Access to automation.

But access does not produce ROI.

Outcomes do.

When a Behavioral Control Module™ governs interaction, the AI system operates less like software and more like performance infrastructure — engineered to influence specific business variables.

- Conversion rates.

- Intake completion.

- Customer trust recovery.

- Fundraising readiness.

- Lead qualification efficiency.

The BCM™ is what allows those outcomes to be tracked, improved, and operationalized over time.

Because it ensures the system behaves in ways that drive them.

The Economic Implication

This has commercial consequences:

If AI behavior is unbounded, vendors sell capability.

If AI behavior is governed, vendors can stand behind performance.

Not as guarantees, but as an engineered lift in the probability of the intended outcome.

The conversation shifts from:

“What can the system do?”

To:

“What does the system reliably produce?”

That is the foundation of Outcomes-as-a-Service.

And behavioral governance is what makes the model viable.
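To make "probability lift" concrete, one simple reading is the difference in conversion rate between a governed deployment and an ungoverned baseline, reported with an uncertainty range rather than as a guarantee. The sketch below assumes a controlled comparison between two cohorts and uses a standard normal-approximation (Wald) interval for the difference of two proportions. The function name and the sample figures are hypothetical, not reported results.

```python
import math


def conversion_lift(base_conversions: int, base_sessions: int,
                    gov_conversions: int, gov_sessions: int,
                    z: float = 1.96) -> tuple[float, tuple[float, float]]:
    """Absolute lift in conversion rate with an approximate 95% interval."""
    p_base = base_conversions / base_sessions
    p_gov = gov_conversions / gov_sessions
    lift = p_gov - p_base
    # Standard error of the difference between two independent proportions.
    se = math.sqrt(p_base * (1 - p_base) / base_sessions
                   + p_gov * (1 - p_gov) / gov_sessions)
    return lift, (lift - z * se, lift + z * se)


# Illustrative numbers only: 100/1000 baseline vs. 130/1000 governed.
lift, (low, high) = conversion_lift(100, 1000, 130, 1000)
print(f"lift={lift:.3f}, interval=({low:.3f}, {high:.3f})")
```

If the interval excludes zero, the lift is unlikely to be noise at roughly the 95% level. If it straddles zero, the data do not yet support a performance claim.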

The Future Standard

As enterprises mature in AI adoption, behavioral containment will become as expected as cybersecurity or data encryption.

Because intelligence without governance produces variability.

Variability undermines outcomes.

And outcomes are the only metric enterprises ultimately fund.

The Behavioral Control Module™ represents the layer where conversational capability becomes operational performance.

Where interaction becomes infrastructure.

Where AI stops being measured by what it generates and starts being measured by what it reliably improves.